Problem: Given two processes i and j, you need to write a program that can guarantee mutual exclusion between the two without any additional hardware support.

Wastage of CPU clock cycles

In layman's terms, when a thread was waiting for its turn, it ended up in a long while loop that tested the condition millions of times per second, doing unnecessary computation. There is a better way to wait, known as 'yield'.

To understand what it does, we need to look more closely at how the process scheduler works in Linux. The idea described here is a simplified version of the scheduler; the actual implementation has many complications.

Consider the following example:

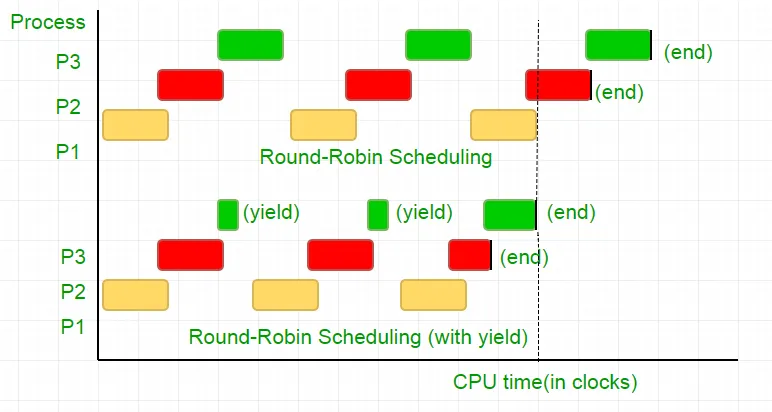

There are three processes: P1, P2 and P3. Process P3 has a while loop similar to the one in our code, which does no useful computation and exits only when P2 finishes its execution. The scheduler puts all three in a round-robin queue. Now say the processor runs at 1,000,000 clock cycles per second and allocates 100 cycles to each process in each iteration. P1 runs first for 100 cycles (0.0001 seconds), then P2 (0.0001 seconds), followed by P3 (0.0001 seconds). Since there are no other processes, this cycle repeats until P2 ends, after which P3 continues and eventually terminates.

Every time slice P3 spends spinning is a complete waste of 100 CPU clock cycles. To avoid this, we voluntarily give up the CPU time slice, i.e. yield, which essentially ends the time slice so that the scheduler picks the next process to run. Now we test our condition once and then give up the CPU. Assuming our test takes 25 clock cycles, we save 75% of the computation in each time slice.

With a processor clock speed of 1 MHz, this is a substantial saving.
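The arithmetic above can be checked with a small simulation. This is a deliberately simplified model and not how the Linux scheduler actually behaves: the function name `simulate_round_robin`, its parameters, and the 1000-cycle workloads for P1 and P2 are assumptions made for illustration. Only the 100-cycle time slice and the 25-cycle test come from the text.

```python
def simulate_round_robin(p1_work, p2_work, slice_cycles=100,
                         test_cycles=25, use_yield=False):
    """Return (cycles P3 spends waiting, total cycles until P2 finishes)."""
    remaining = {"P1": p1_work, "P2": p2_work}
    waiting = 0
    clock = 0
    while remaining["P2"] > 0:
        # P1 and P2 each run for at most one full time slice.
        for name in ("P1", "P2"):
            used = min(slice_cycles, remaining[name])
            remaining[name] -= used
            clock += used
        # P3's turn: it is still waiting if P2 has work left.
        if remaining["P2"] > 0:
            # Spinning burns the whole slice; yielding costs one test only.
            spent = test_cycles if use_yield else slice_cycles
            waiting += spent
            clock += spent
    return waiting, clock


if __name__ == "__main__":
    spin, _ = simulate_round_robin(1000, 1000, use_yield=False)
    yielded, _ = simulate_round_robin(1000, 1000, use_yield=True)
    print(spin, yielded, 1 - yielded / spin)  # 900 225 0.75
```

With these assumed workloads, P3 waits through nine rounds, so spinning costs 900 cycles and yielding costs 225, which matches the 75% saving per time slice.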

Different platforms provide different functions to achieve this. Linux provides sched_yield().

    void lock(int self)
    {
        flag[self] = 1;
        turn = 1-self;
        while (flag[1-self] == 1 && turn == 1-self)
            // Only change is the addition of
            // sched_yield() call
            sched_yield();
    }
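The same yielding lock can be tried out in Python. The sketch below is only an illustration under stated assumptions: the names `PetersonLock`, `run_counter`, `rounds` and `per_round` are hypothetical, and it relies on the standard CPython build, where the global interpreter lock keeps the individual reads and writes in program order. `os.sched_yield()` is only available on Unix-like systems, so `time.sleep(0)` is used as a fallback elsewhere.

```python
import os
import threading
import time

# Use sched_yield() where the platform offers it; otherwise fall back to
# time.sleep(0), which also lets other threads run.
yield_cpu = getattr(os, "sched_yield", lambda: time.sleep(0))


class PetersonLock:
    """Two-thread Peterson lock that yields the CPU while waiting."""

    def __init__(self):
        self.flag = [0, 0]
        self.turn = 0

    def lock(self, me):
        other = 1 - me
        self.flag[me] = 1      # announce interest
        self.turn = other      # give priority to the other thread
        while self.flag[other] == 1 and self.turn == other:
            yield_cpu()        # give up the time slice instead of spinning

    def unlock(self, me):
        self.flag[me] = 0


def run_counter(rounds=50, per_round=200):
    """Two threads increment a shared counter under the lock."""
    lock = PetersonLock()
    total = [0]

    def worker(me):
        for _ in range(rounds):
            lock.lock(me)
            for _ in range(per_round):
                total[0] += 1  # critical section
            lock.unlock(me)

    threads = [threading.Thread(target=worker, args=(i,)) for i in (0, 1)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return total[0]
```

Calling `run_counter()` should return `2 * rounds * per_round` when mutual exclusion holds. The workload is kept small on purpose so that the example finishes quickly.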

Memory fence

The code in the earlier tutorial might have worked on most systems, but it was not 100% correct. The logic was sound, but most modern CPUs employ performance optimizations that can result in out-of-order execution. This reordering of memory operations (loads and stores) normally goes unnoticed within a single thread of execution, but it can cause unpredictable behaviour in concurrent programs.

Consider this example:

    while (f == 0);
    // Memory fence required here
    print x;

In the example above, the compiler considers the two statements independent of each other and may therefore reorder them to make the code more efficient, which can cause problems in concurrent programs. To avoid this, we place a memory fence, which tells the compiler that the statements on either side of the barrier may be related and must not be moved across it.

So the order of the statements

    flag[self] = 1;
    turn = 1-self;
    while (turn condition check)
        yield();

must be exactly the same for the lock to work. Otherwise the lock can fail, for example by deadlocking or by letting both threads into the critical section at once.
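To see why the order matters, the lock entry can be modelled as four memory operations per thread and every interleaving of the two threads can be enumerated. This is a rough sketch under explicit assumptions: the operation names, the `REORDERED` sequence (the load of the other flag hoisted above the stores) and the function `both_can_enter` are all hypothetical, and the model only looks at the first evaluation of the entry condition.

```python
from itertools import combinations

# Order in which each thread issues its memory operations.
PROGRAM_ORDER = ("store_flag", "store_turn", "load_flag", "load_turn")
# Hypothetical reordering: the load of the other flag moves above the stores.
REORDERED = ("load_flag", "store_flag", "store_turn", "load_turn")


def both_can_enter(order):
    """Return True if some interleaving lets both threads pass the check."""
    n = len(order)
    # Choose which of the 2n time slots belong to thread 0.
    for slots in combinations(range(2 * n), n):
        schedule = [0 if i in slots else 1 for i in range(2 * n)]
        flag, turn = [0, 0], 0
        seen = [{}, {}]   # values each thread has loaded
        pc = [0, 0]       # next operation index per thread
        for me in schedule:
            other = 1 - me
            op = order[pc[me]]
            pc[me] += 1
            if op == "store_flag":
                flag[me] = 1
            elif op == "store_turn":
                turn = other
            elif op == "load_flag":
                seen[me]["flag"] = flag[other]
            else:  # load_turn
                seen[me]["turn"] = turn
        # A thread enters unless it saw the other flag set and the other's turn.
        enters = [
            not (seen[t]["flag"] == 1 and seen[t]["turn"] == 1 - t)
            for t in (0, 1)
        ]
        if all(enters):
            return True
    return False


if __name__ == "__main__":
    print(both_can_enter(PROGRAM_ORDER))  # False
    print(both_can_enter(REORDERED))      # True
```

In program order no interleaving lets both threads through, whereas with the load moved ahead of the stores both threads can read a flag of 0 and enter together. A memory fence is meant to rule out exactly this kind of reordering.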

To ensure this, compilers provide an instruction that prevents statements from being reordered across the barrier. In the case of GCC, it is __sync_synchronize().

So the modified code becomes

Full implementation in C:

    // Filename: peterson_yieldlock_memoryfence.cpp
    // Use below command to compile:
    // g++ -pthread peterson_yieldlock_memoryfence.cpp -o peterson_yieldlock_memoryfence
    #include

    // Filename: peterson_yieldlock_memoryfence.c
    // Use below command to compile:
    // gcc -pthread peterson_yieldlock_memoryfence.c -o peterson_yieldlock_memoryfence
    #include

    import java.util.concurrent.atomic.AtomicInteger;

    public class PetersonYieldLockMemoryFence {
        static AtomicInteger[] flag = new AtomicInteger[2];
        static AtomicInteger turn = new AtomicInteger();
        static final int MAX = 1000000000;
        static int ans = 0;

        static void lockInit() {
            flag[0] = new AtomicInteger();
            flag[1] = new AtomicInteger();
            flag[0].set(0);
            flag[1].set(0);
            turn.set(0);
        }

        static void lock(int self) {
            flag[self].set(1);
            turn.set(1 - self);
            // Memory fence to prevent the reordering of instructions beyond this barrier.
            // In Java, AtomicInteger get()/set() have volatile semantics, which
            // provide this guarantee implicitly.
            // No direct equivalent to atomic_thread_fence is needed.
            while (flag[1 - self].get() == 1 && turn.get() == 1 - self)
                Thread.yield();
        }

        static void unlock(int self) {
            flag[self].set(0);
        }

        static void func(int s) {
            int i = 0;
            int self = s;
            System.out.println("Thread Entered: " + self);
            lock(self);
            // Critical section (Only one thread can enter here at a time)
            for (i = 0; i < MAX; i++)
                ans++;
            unlock(self);
        }

        public static void main(String[] args) {
            // Initialize the lock
            lockInit();
            // Create two threads (both run func)
            Thread t1 = new Thread(() -> func(0));
            Thread t2 = new Thread(() -> func(1));
            // Start the threads
            t1.start();
            t2.start();
            try {
                // Wait for the threads to end.
                t1.join();
                t2.join();
            } catch (InterruptedException e) {
                e.printStackTrace();
            }
            System.out.println("Actual Count: " + ans + " | Expected Count: " + MAX * 2);
        }
    }

    import threading

    flag = [0, 0]
    turn = 0
    MAX = 10**9
    ans = 0

    def lock_init():
        # This function initializes the lock by resetting the flags and turn.
        global flag, turn
        flag = [0, 0]
        turn = 0

    def lock(self):
        # This function is executed before entering the critical section.
        # It sets the flag for the current thread and gives the turn to the other thread.
        global flag, turn
        flag[self] = 1
        turn = 1 - self
        while flag[1-self] == 1 and turn == 1-self:
            pass

    def unlock(self):
        # This function is executed after leaving the critical section.
        # It resets the flag for the current thread.
        global flag
        flag[self] = 0

    def func(s):
        # This function is executed by each thread. It locks the critical section,
        # increments the shared variable and then unlocks the critical section.
        global ans
        self = s
        print(f'Thread Entered: {self}')
        lock(self)
        for _ in range(MAX):
            ans += 1
        unlock(self)

    def main():
        # This is the main function where the threads are created and started.
        lock_init()
        t1 = threading.Thread(target=func, args=(0,))
        t2 = threading.Thread(target=func, args=(1,))
        t1.start()
        t2.start()
        t1.join()
        t2.join()
        print(f'Actual Count: {ans} | Expected Count: {MAX*2}')

    if __name__ == '__main__':
        main()

    class PetersonYieldLockMemoryFence {
        static flag = [0, 0];
        static turn = 0;
        static MAX = 1000000000;
        static ans = 0;

        // Function to acquire the lock
        static async lock(self) {
            PetersonYieldLockMemoryFence.flag[self] = 1;
            PetersonYieldLockMemoryFence.turn = 1 - self;
            // Asynchronous loop with a small delay to yield
            while (PetersonYieldLockMemoryFence.flag[1 - self] == 1 &&
                   PetersonYieldLockMemoryFence.turn == 1 - self) {
                await new Promise(resolve => setTimeout(resolve, 0));
            }
        }

        // Function to release the lock
        static unlock(self) {
            PetersonYieldLockMemoryFence.flag[self] = 0;
        }

        // Function representing the critical section
        static func(s) {
            let i = 0;
            let self = s;
            console.log('Thread Entered: ' + self);
            // Lock the critical section
            PetersonYieldLockMemoryFence.lock(self).then(() => {
                // Critical section (Only one thread can enter here at a time)
                for (i = 0; i < PetersonYieldLockMemoryFence.MAX; i++) {
                    PetersonYieldLockMemoryFence.ans++;
                }
                // Release the lock
                PetersonYieldLockMemoryFence.unlock(self);
            });
        }

        // Main function
        static main() {
            // Create two threads (both run func)
            const t1 = new Thread(() => PetersonYieldLockMemoryFence.func(0));
            const t2 = new Thread(() => PetersonYieldLockMemoryFence.func(1));
            // Start the threads
            t1.start();
            t2.start();
            // Wait for the threads to end.
            setTimeout(() => {
                console.log('Actual Count: ' + PetersonYieldLockMemoryFence.ans +
                            ' | Expected Count: ' + PetersonYieldLockMemoryFence.MAX * 2);
            }, 1000); // Delay for a while to ensure threads finish
        }
    }

    // Define a simple Thread class for simulation
    class Thread {
        constructor(func) {
            this.func = func;
        }
        start() {
            this.func();
        }
    }

    // Run the main function
    PetersonYieldLockMemoryFence.main();

    // mythread.h (A wrapper header file with assert statements)
    #ifndef __MYTHREADS_h__
    #define __MYTHREADS_h__
    #include

    // mythread.h (A wrapper header file with assert
    // statements)
    #ifndef __MYTHREADS_h__
    #define __MYTHREADS_h__
    #include

    import threading

    # Function to lock a thread lock
    def Thread_lock(lock):
        lock.acquire()  # Acquire the lock
        # No need for assert in Python; acquire will raise an exception if it fails

    # Function to unlock a thread lock
    def Thread_unlock(lock):
        lock.release()  # Release the lock
        # No need for assert in Python; release will raise an exception if it fails

    # Function to create a thread
    def Thread_create(target, args=()):
        thread = threading.Thread(target=target, args=args)
        thread.start()  # Start the thread
        # No need for assert in Python; thread.start() will raise an exception if it fails
        return thread  # Return the handle so the caller can join it

    # Function to join a thread
    def Thread_join(thread):
        thread.join()  # Wait for the thread to finish
        # No need for assert in Python; thread.join() will raise an exception if it fails

Output:

Thread Entered: 1

Thread Entered: 0

Actual Count: 2000000000 | Expected Count: 2000000000